Monetizing a Defect

How Anthropic's pricing structure turned a known model limitation into a billable feature, and what it signals about the AI subscription era.

Forgive me for a moment while I rant.

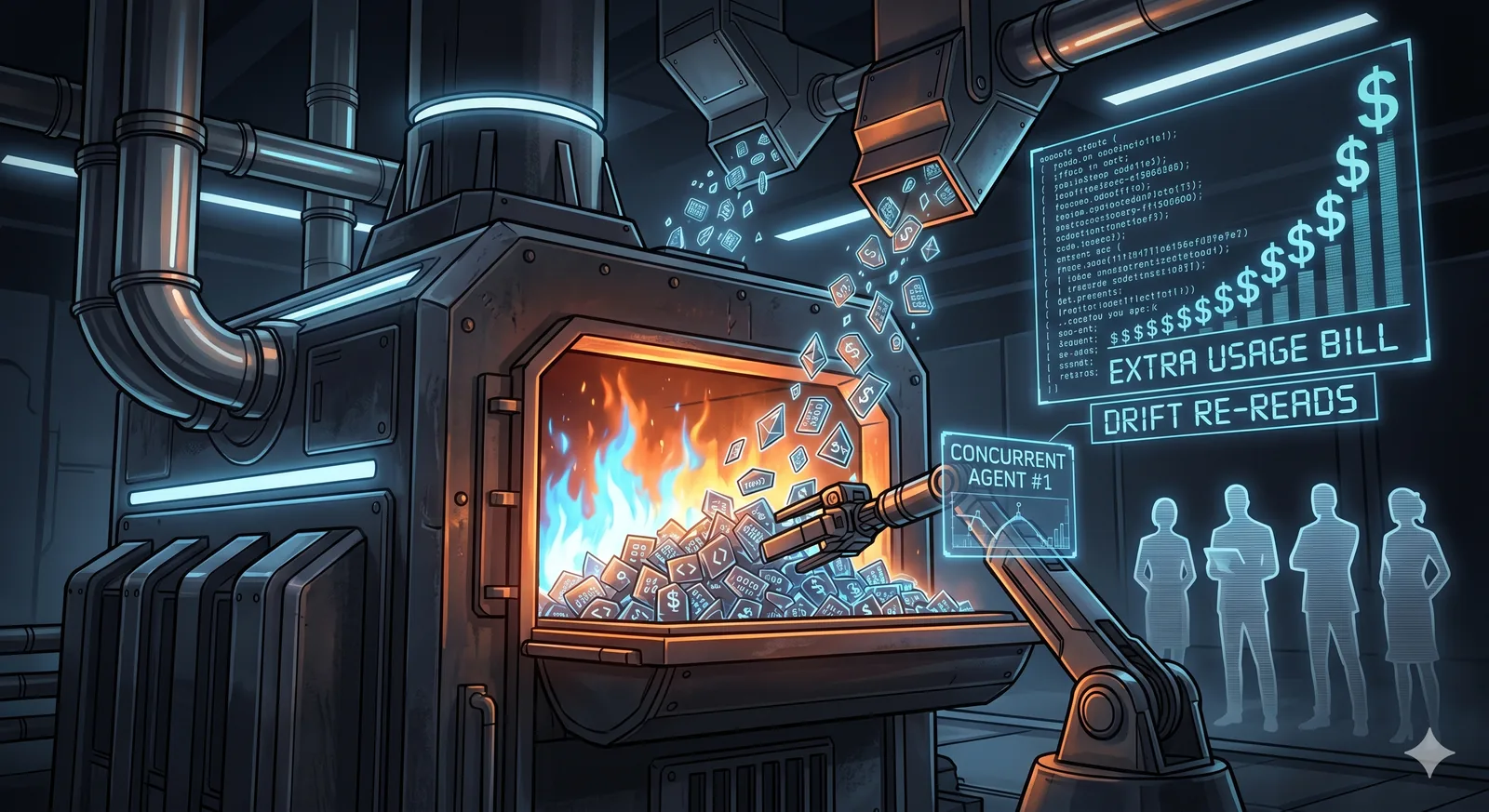

I’m a solo founder running a pre-revenue AI infrastructure company. This week I burned $100 in three hours on Claude Code “extra usage” doing routine work. I want to walk through what happened, because the pricing structure that produced that bill is not an accident, and the pattern is showing up across the industry.

For context, I was running Claude Code on a Max plan with extra usage enabled, working through architectural changes across a moderate-sized codebase. I had Opus 4.7 selected with the 1-million-token (1M) context variant. Three hours and roughly $100 later, I shut it down.

I can already hear the collective internet nodding sagely and pointing:

“Well there’s your problem.”

I’ll answer the natural question: why was I using 1M context at all? Because of drift. Every frontier model degrades over long sessions. If you’ve engaged in a complex project, you’ve likely seen the decrease in quality that happens as the agent loses track of constraints established earlier. Uncovering these problems is time-consuming, and they tend to surface late: only some time afterward does it become clear that we’ve once again been served scrambled eggs when we asked for an omelet. This is no surprise to the vendor. The 1M context window is the marketed mitigation: keep more in view, lose less detail to compaction, suffer fewer drift events. This is how we’ve been told to resolve the issue, and I can see why.

So far this is just Ferengi. Here’s where it gets Romulan.

The Sonnet Asymmetry

Per Anthropic’s support docs and the documented behavior, Opus 4.7 with 1M context is included on Max, Team, and Enterprise plans. By contrast, Sonnet 4.6 with 1M context requires extra usage on every plan except usage-based Enterprise.

Sonnet costs less per token to serve than Opus, which is why it is the preferred volume model. So there is no engineering reason 1M Sonnet should cost the user more than 1M Opus on the same plan. The asymmetry exists precisely because Sonnet is the volume model, the one Anthropic recommends as the everyday default: including its 1M variant in the subscription would cost Anthropic more in aggregate than including Opus 1M does.

The result, from the user’s perspective: the model recommended for routine work has its drift mitigation paywalled. The drift exists in the cheaper model. The fix for the drift is gated behind metered billing.

And just like that, defense against a defect in the product becomes a paywalled feature.

I’m not claiming a motive. I’m describing structure. The structure was carefully modelled and reviewed before it shipped. Companies at this scale don’t release pricing tiers without finance, product, and legal review: they knew exactly how this would play out. Whatever the motive, the architecture is deliberate.

I assert this is intentional because it isn’t a single decision in isolation; it’s a pattern of decisions starting in March:

March 26: Anthropic adjusted five-hour session limits during peak weekday hours (5-11 AM PT). Weekly totals were officially “unchanged but redistributed”; roughly 7% of users hit session limits they would not have hit before, particularly on Pro.

March 27-28: The Claude Code /model selector was reverted from showing Opus 1M as “included” on Max plans back to “Billed as extra usage.” Multiple GitHub Issues documented this regression. The change contradicted an email Anthropic sent Max subscribers a week earlier that explicitly told them 1M context was now included.

March 31: Anthropic acknowledged users were hitting usage limits in Claude Code far faster than expected and called it a top priority.

April 4: Third-party agent frameworks were blocked from Pro and Max subscriptions and pushed to pay-as-you-go or API keys.

April 21-22: Claude Code was briefly removed from Pro entirely, then reversed under backlash. Anthropic’s head of growth characterized the changes as small adjustments and noted that usage had changed and the current plans were not built for it.

The trajectory is consistent: a subscription product mispriced for sustained engineering use, adjusting under pressure, pushing cost back onto the users who built workflows on the original terms.

This pattern is not limited to Anthropic. We’re seeing an industry trend pushing costs up across the board.

GitHub Copilot announced on April 24 that all plans transition to usage-based billing on June 1, 2026. Pro stays $10/month with $10 in AI Credits. Pro+ stays $39/month with $39 in credits. Both are metered against token consumption at API rates from June 1 forward. GitHub also paused new Pro and Pro+ signups on April 20; evidently they’re at capacity too.

GitHub used a different word for the same shape of change. Anthropic adjusted limits and gated mitigations. GitHub eliminated the flat-rate subscription model entirely: the monthly fee now buys a fixed pool of credits rather than a plan. Both push the variable cost back to the user.

The economics of frontier inference don’t support the early subscription pricing. Every lab and every reseller will face the same pressure. Copilot’s June 1 conversion is the explicit version. Anthropic’s April adjustments are the implicit version. The direction is the same.

What It Means in Practice

The implicit contract of predictable monthly cost for sustained engineering use has broken. The operational response I’ve adopted:

- Treat AI subscriptions as a utility you invoke deliberately, not a substrate you build on. The substrate is too unstable to build on right now.

- Own the hardware where you can. A workstation-class GPU pays back inside two months at current extra-usage rates for sustained heavy use. The MBA-school heuristic of “rent, don’t buy” assumes a stable rental market and variable utilization. Neither of those conditions currently holds.

- Run open-weight models locally for the high-volume routine work. Reserve the frontier APIs for the moments where the capability differential is real and worth paying for explicitly.

- Keep extra usage off by default. The feature exists to bill your credit card without warning, and the threshold for accidental high spend is hours, not days.
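
To make the payback claim in the hardware bullet checkable, here is a rough break-even sketch. The only figure taken from this post is the burn rate ($100 in three hours). Everything else is a hypothetical placeholder to swap for your own numbers: the $5,000 workstation price, the $0.15/hour electricity cost, four heavy hours a day, and 21 working days a month. It also assumes, generously, that a local open-weight model can stand in for every metered hour, which will not hold for all work.

```python
def hourly_burn(spend_usd: float, hours: float) -> float:
    """Average metered spend per hour of use."""
    return spend_usd / hours


def breakeven_hours(hardware_usd: float, metered_per_hour: float,
                    local_per_hour: float = 0.0) -> float:
    """Hours of use after which owned hardware has paid for itself."""
    saving_per_hour = metered_per_hour - local_per_hour
    if saving_per_hour <= 0:
        raise ValueError("local running cost must be below the metered rate")
    return hardware_usd / saving_per_hour


# From the post: $100 of extra usage in three hours.
METERED_RATE = hourly_burn(100.0, 3.0)

# Hypothetical assumptions, not figures from the post.
WORKSTATION_USD = 5000.0      # assumed price of a GPU workstation
POWER_USD_PER_HOUR = 0.15     # assumed electricity cost under load
HEAVY_HOURS_PER_DAY = 4.0     # assumed daily heavy usage
WORKDAYS_PER_MONTH = 21.0     # assumed working days per month

hours = breakeven_hours(WORKSTATION_USD, METERED_RATE, POWER_USD_PER_HOUR)
months = hours / HEAVY_HOURS_PER_DAY / WORKDAYS_PER_MONTH

print(f"metered burn: ${METERED_RATE:.2f}/hour")
print(f"break-even after {hours:.0f} hours, about {months:.1f} months")
```

Under those placeholder numbers the break-even lands at roughly 150 hours of heavy use, a little under two months. Halve the daily hours or double the hardware price and the payback stretches to about four months, so the claim is sensitive to how sustained the "sustained heavy use" really is.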

The Sonnet-1M paywall is not the largest dollar amount in this story. It’s the most diagnostic. When the recommended remedy for a known model limitation is metered separately from the model, the pricing structure is no longer aligned with user outcomes. It’s a signal about which way the relationship is moving.

Build accordingly.